Robot Vision (and action)!

Hello Gemini Robotics! Google surprised me a couple of weeks ago with some cool tech. I’m watching carefully for the events that get AI into hardware, and Vision-Language-Action (VLA) models have a lot of promise for creating more general and adaptable robots. It’s a good name: intelligence that integrates vision, language, and action, allowing robots to understand natural language commands and execute complex tasks in dynamic environments. This represents a move away from narrowly focused, task-specific systems toward robots that can generalize skills across different scenarios.

Google’s PaLM-E debuted in 2023 as one of the first attempts to extend large language models into the robotics domain, using a multimodal setup (text and vision) to instruct simple robot behaviors. While groundbreaking for its time, PaLM-E’s capabilities were limited in terms of task complexity and real-world robustness. Fast-forward to 2025, and Gemini Robotics takes these ideas much further by harnessing the significantly more powerful Gemini 2.0 foundation model. In doing so, Gemini’s Vision-Language-Action (VLA) architecture not only understands language and visual inputs, but also translates them into real-time physical actions. According to DeepMind’s official blog post—“Gemini Robotics brings AI into the physical world”—this new system enables robots of virtually any shape or size to perform a wide range of tasks in dynamic environments, effectively bridging the gap between large-scale multimodal reasoning and real-world embodied intelligence in a way that PaLM-E never could.

However, while its potential is transformative, there are still challenges to overcome in trust and safety, dealing with hardware constraints, and fine-tuning physical actions that might not perfectly align with human intuition.

Introduction

Google DeepMind’s Gemini Robotics is pretty cool. It’s an AI model designed to bring advanced intelligence into the physical world of robots. Announced on March 12, 2025, it represents the company’s most advanced vision-language-action (VLA) system, meaning it can see and interpret visual inputs, understand and generate language, and directly output physical actions. Built on DeepMind’s powerful Gemini 2.0 large language model, the Gemini Robotics system is tailored for robotics applications, enabling machines to comprehend new situations and perform complex tasks in response to natural language commands. This model is a major step toward “truly general purpose robots,” integrating multiple AI capabilities so robots can adapt, interact, and manipulate their environment more like humans do.

Origins and Development of Gemini Robotics

The development of Gemini Robotics is rooted in Google and DeepMind’s combined advances in AI and robotics over the past few years. Google had long viewed robotics as a “helpful testing ground” for AI breakthroughs in the physical realm. Early efforts laid the groundwork: Google researchers pioneered systems like PaLM-SayCan, which combined language models with robotic planning, and PaLM-E, a multimodal embodied language model that hinted at the potential of integrating language understanding with robotic control. In mid-2023, Google DeepMind introduced Robotics Transformer 2 (RT-2), a VLA model that learned from both web data and real robot demonstrations and could translate knowledge into generalized robotic instructions. RT-2 showed improved generalization and rudimentary reasoning: it could interpret new commands and leverage high-level concepts (e.g. identifying an object that could serve as an improvised tool) beyond its direct training. However, RT-2 was limited in that it could only repurpose physical movements it had already practiced; it struggled with fine-grained manipulations it hadn’t explicitly learned.

Gemini Robotics builds directly on these foundations, combining Google’s latest large language model advances with DeepMind’s robotics research. It is built on the Gemini 2.0 foundation model, which is a multimodal AI capable of processing text, images, and more. By adding physical actions as a new output modality to this AI, the team created an agent that can not only perceive and talk, but also act in the real world. The project has been led by Google DeepMind’s robotics division (headed by Carolina Parada) and involved integrating multiple research breakthroughs. According to DeepMind, Gemini Robotics and a companion model called Gemini Robotics-ER were concurrently developed and launched as “the foundation for a new generation of helpful robots”. The models were primarily trained using data from DeepMind’s bi-arm robot platform ALOHA 2 (a dual-armed robotic system introduced in 2024). Early testing was focused on this platform, but the system was also adapted to other hardware – demonstrating control of standard robot arms (Franka Emika robots common in labs) and even a humanoid form (the Apollo robot from Apptronik). This cross-pollination of Google’s large-scale AI (Gemini 2.0) with DeepMind’s embodied AI research culminated in Gemini Robotics, which was formally unveiled in March 2025. Its development reflects a convergence of trends: ever-larger and more general AI models, and the push to make robots more adaptive and intelligent in uncontrolled environments.

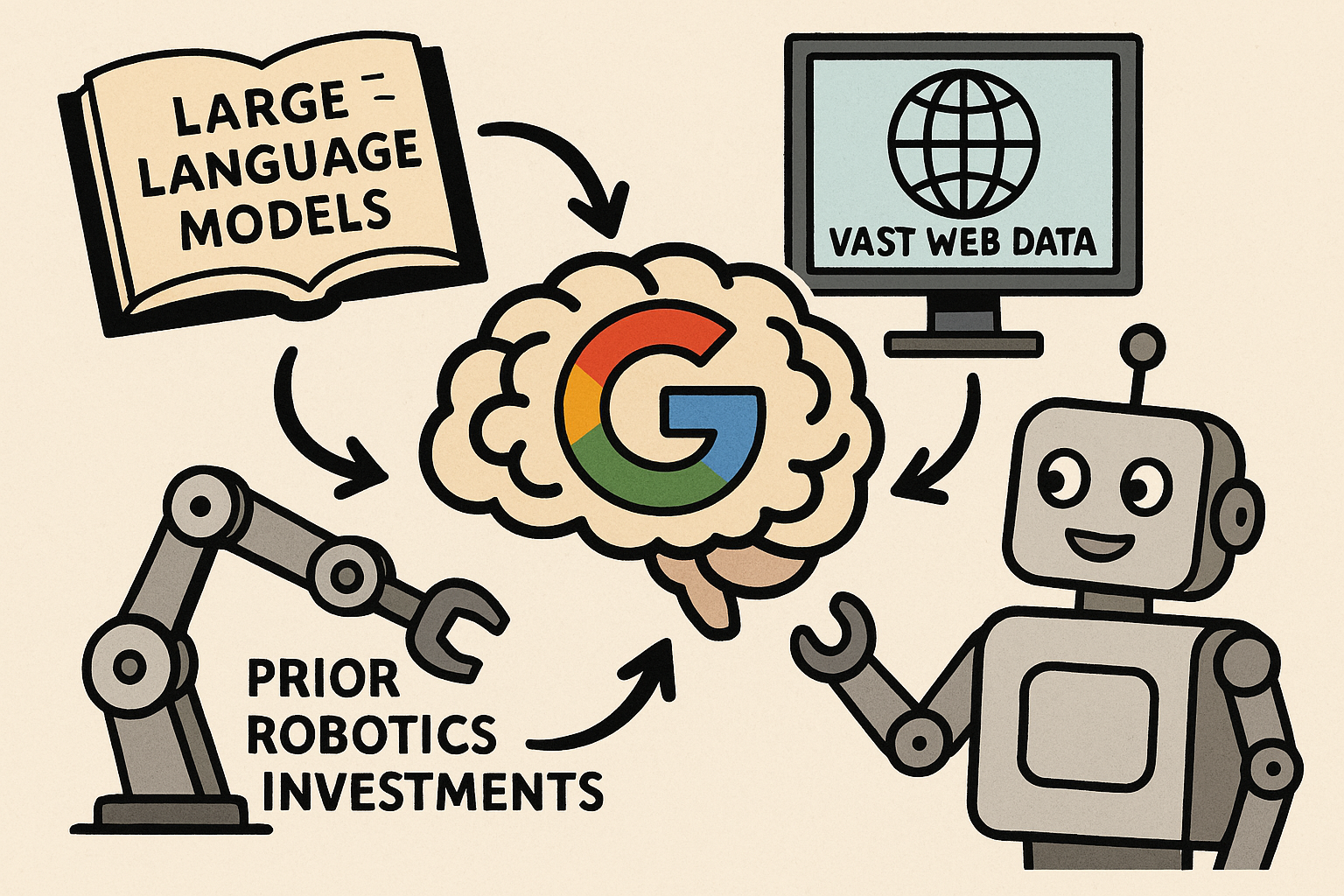

Google DeepMind’s announcement comes amid a “race to make robots useful” in the real world, with several companies striving for similar breakthroughs. Notably, just a month prior, robotics startup Figure AI ended a collaboration with OpenAI after claiming an internal AI breakthrough for robots – underscoring that multiple players are working toward general robotic intelligence (awesome). Within this context, Gemini Robotics emerged as one of the most ambitious efforts, leveraging Google’s unique assets (massive language models, vast web data, and prior robotics investments) to create a generalist “brain” for robots.

The Vision-Language-Action (VLA) Model Explained

At the heart of Gemini Robotics is its vision-language-action (VLA) architecture, which seamlessly integrates perception, linguistic reasoning, and motor control. In practical terms, the model can take visual inputs (such as images or camera feeds of a scene), along with natural language inputs (spoken or written instructions), and produce action outputs that drive a robot’s motors or high-level control system. This tri-modal integration allows a robot equipped with Gemini to perceive its surroundings, understand what needs to be done, and then execute the required steps – all in one fluid loop.
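
To make that loop concrete, here is a toy sketch of perceive, decide, act. It is not Gemini Robotics' interface: `Observation`, `ToyPolicy`, and `run_episode` are hypothetical names, the "scene" is just two positions on a line, and the "policy" is a hand-written stand-in for a learned model. The point is only the shape of the loop: the policy is re-queried with a fresh observation at every step.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Observation:
    """What the robot 'sees' at one step: two positions on a line."""
    gripper_x: float
    target_x: float


class ToyPolicy:
    """Hypothetical stand-in for a VLA model: (observation, instruction) -> action."""

    def __init__(self, max_step: float = 0.1):
        self.max_step = max_step

    def act(self, obs: Observation, instruction: str) -> float:
        # A real model would interpret free-form language; this toy
        # understands exactly one command and otherwise stays still.
        if instruction != "move to target":
            return 0.0
        error = obs.target_x - obs.gripper_x
        # Clamp the step so the gripper moves at most max_step per tick.
        return max(-self.max_step, min(self.max_step, error))


def run_episode(
    policy: ToyPolicy,
    instruction: str,
    gripper_x: float,
    target_x: float,
    steps: int = 50,
    tol: float = 1e-3,
    move_target_at: Optional[Tuple[int, float]] = None,
) -> Tuple[float, int]:
    """Run the loop; optionally move the target mid-episode to mimic a disturbance."""
    for t in range(steps):
        if move_target_at is not None and t == move_target_at[0]:
            target_x = move_target_at[1]          # someone shuffles the scene
        obs = Observation(gripper_x, target_x)    # perceive
        if abs(obs.target_x - obs.gripper_x) < tol:
            return gripper_x, t                   # done
        gripper_x += policy.act(obs, instruction) # decide and act
    return gripper_x, steps
```

Calling `run_episode(ToyPolicy(), "move to target", 0.0, 0.5, move_target_at=(3, -0.2))` moves the target partway through; because the policy looks at the scene again on every step, the gripper simply turns around and follows it.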

Under the hood, Gemini Robotics leverages the representation and reasoning capabilities of the Gemini 2.0 LLM to interpret both the visual scene and the instruction. The visual input is processed by advanced vision models (DeepMind has indicated that PaLI-X and related vision transformers were adapted as part of earlier systems like RT-2), enabling the AI to recognize objects and understand spatial relationships in the camera view. This is paired with Gemini’s language understanding, which can comprehend instructions phrased in everyday, conversational language, even in different wording or multiple languages. By fusing these inputs, the model forms an internal plan or representation of what action to take. The crucial innovation is that the output is not text, but action commands: Gemini Robotics generates a sequence of motor control tokens or low-level instructions that directly control the robot’s joints and grippers. These action outputs are encoded similarly to language tokens: for example, a series of numeric tokens might represent moving a robotic arm to certain coordinates or applying a specific force. This design treats actions as another “language” for the AI to speak.
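
To get a feel for "actions as tokens", here is a minimal sketch of one way continuous commands could be turned into integers and back. It is an assumption for illustration, not Gemini Robotics' actual encoding, which isn't described in detail here: each action value is clipped to a range and dropped into one of 256 uniform bins. `NUM_BINS`, `encode_action`, and `decode_action` are made-up names.

```python
from typing import List, Sequence

NUM_BINS = 256  # assumed number of discrete tokens per action dimension


def encode_action(
    values: Sequence[float],
    low: float = -1.0,
    high: float = 1.0,
    bins: int = NUM_BINS,
) -> List[int]:
    """Map each continuous value in [low, high] to an integer token in [0, bins - 1]."""
    tokens = []
    for v in values:
        clipped = min(max(v, low), high)           # keep the value in range
        frac = (clipped - low) / (high - low)      # normalize to [0, 1]
        tokens.append(min(int(frac * bins), bins - 1))
    return tokens


def decode_action(
    tokens: Sequence[int],
    low: float = -1.0,
    high: float = 1.0,
    bins: int = NUM_BINS,
) -> List[float]:
    """Map each token back to the center of its bin."""
    width = (high - low) / bins
    return [low + (t + 0.5) * width for t in tokens]
```

With these defaults, `encode_action([-1.0, 0.0, 1.0])` gives `[0, 128, 255]`, and decoding loses at most half a bin width (about 0.004 on a range of -1 to 1). A model that emits such integers can use the same next-token machinery it uses for words.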

An intuitive example of how the VLA model works is the task: “Pick up the banana and put it in the basket.” Given this spoken command, a Gemini-powered robot will use its camera to scan the scene, recognize the banana and the basket (drawing on its visual training), understand the instruction and the desired outcome, and then generate the motor actions to reach out, grasp the banana, and drop it into the basket. All of this can occur without hard-coding specific moves for “banana” or “basket” – the model’s general vision-language knowledge enables it to figure it out. Another striking example demonstrated by Google DeepMind was: “Fold an origami fox.” Without having been explicitly trained on that exact task, the system was able to combine its knowledge of origami (learned from text or images during pre-training) with its ability to control robot hands, successfully folding a paper into the shape of a fox. This showcased how Gemini’s world knowledge can translate into physical skill, even for tasks the robot has never performed before. The VLA architecture effectively allows the robot to generalize concepts to new actions – it “understands” what folding a fox entails and can execute the fine motor steps to do so, all guided by a single natural-language instruction.

Technical Functionality and Capabilities

Gemini Robotics is engineered around three key capabilities needed for versatile robots: generality, interactivity, and dexterity. In each of these dimensions, it represents a leap over previous systems, inching closer to the vision of a general-purpose helper robot.

- Generality and World Knowledge: Because it is built atop the Gemini 2.0 model (which was trained on vast internet data and encodes extensive commonsense and factual knowledge), Gemini Robotics can handle a wide variety of tasks and adapt to new situations on the fly. It leverages “Gemini’s world understanding to generalize to novel situations,” allowing it to tackle tasks it never saw during training. For example, researchers report that the model-controlled robot could perform a “slam dunk” with a toy basketball into a mini hoop when asked – despite never having seen anything related to basketball in its robot training data. The robot’s foundation model knew what a basketball and hoop are and what “slam dunk” means conceptually, and it could connect those concepts to actual motions (picking up the ball and dropping it through the hoop) to satisfy the command. This kind of cross-domain generalization – applying knowledge from one context to a new, embodied scenario – is a hallmark of Gemini Robotics. In quantitative terms, Google DeepMind noted that Gemini Robotics more than doubled performance on a comprehensive generalization benchmark compared to prior state-of-the-art VLA models. In other words, when tested on tasks, objects, or instructions outside its training distribution, it succeeded more than twice as often as earlier models. This suggests a significant improvement in robustness to novel situations.

- Interactivity and Adaptability: Gemini Robotics is designed to work collaboratively with humans and respond dynamically to changes in the environment. Thanks to its language proficiency, the robot can understand nuanced instructions and even follow along in a back-and-forth dialogue if needed. The same request can be phrased in different ways and the model will still follow it. Crucially, it also demonstrates real-time adaptability: it “continuously monitors its surroundings” and can adjust its plan if something changes mid-task. An example shown by researchers involved a robot told to place a bunch of grapes into a specific container. Midway through, a person shuffled the containers around on the table. Gemini’s controller tracked the target container as it moved and still completed the task, much like keeping an eye on the right card in a game of three-card monte. This shows a level of situational awareness and reactivity that a static, precomputed plan would lack. If an object slips from the robot’s grasp or is moved by someone, the model can quickly replan its actions and continue appropriately. In practical terms, this makes the robot much more resilient in unstructured, dynamic settings like homes or workplaces, where surprises happen regularly. Users can also update instructions on the fly (for instance, interrupting a task with a new command) and Gemini will smoothly transition, a quality of “steerability” that is important for human-robot collaboration.

- Dexterity and Physical Skills: A standout feature of Gemini Robotics is its level of fine motor control. Many everyday tasks humans do (buttoning a shirt, folding paper, handling fragile objects) are notoriously hard for robots because they require precise force and coordination. Gemini Robotics has demonstrated advanced dexterity by tackling multi-step manipulation tasks that were previously considered out of reach for robots without extensive task-specific programming. In tests, its dual-armed system could fold an origami fox, pack a lunchbox with assorted items, carefully pick a single card from a deck without bending others, and even seal snacks in a Ziploc bag. These examples illustrate delicate handling and coordination of two arms/hands, akin to human bimanual tasks. Observers noted that this capability appears to “solve one of robotics’ biggest challenges: getting robots to turn their ‘knowledge’ into careful, precise movements in the real world”. It’s important to note, however, that some of the most intricate feats were achieved in the context of tasks the model was trained on with high-quality data, meaning the robot had practice or human-provided demonstrations for those specific skills. As IEEE Spectrum reported, the impressive origami performance doesn’t yet mean the robot will generalize all dexterous skills; rather, it shows the upper bound of what the system can do when given focused training on a fine motor task. Still, compared to prior systems that could barely grasp a single object reliably, Gemini’s leap to complex manipulation is a significant advancement.

- Multi-Embodiment and Adaptability to Hardware: Another technical strength of Gemini Robotics is its ability to work across different robot form factors. Rather than being tied to one specific robot model or configuration, the Gemini AI was trained in a way that makes it adaptable to “multiple embodiments”. DeepMind demonstrated that a single Gemini Robotics model could control both a fixed bi-arm platform and a humanoid robot with very different kinematics. The team trained primarily on their ALOHA 2 twin-arm system, but also showed the model could be specialized to operate the Apptronik “Apollo” humanoid – which has arms, hands, and a torso – to perform similar tasks in a human-like form. This hints at a future where one core AI could power many kinds of robots, from warehouse picker arms to home assistant humanoids, with minimal additional training for each. Google has in fact partnered with Apptronik and other robotics companies as trusted testers to apply Gemini in different settings. The generalist nature of the model means it encodes abstract skills that can transfer to new bodies. Of course, some calibration is needed for each hardware – the system might need to learn the dynamics and constraints of a new robot – but it doesn’t need to learn the task from scratch. This is a big efficiency gain: historically, each new robot or use-case required building an AI model almost from zero or collecting a trove of demonstrations, whereas Gemini provides a strong starting point.

- Embodied Reasoning (Gemini Robotics-ER): Alongside the main Gemini Robotics model, Google DeepMind introduced Gemini Robotics-ER (with “ER” standing for Embodied Reasoning). This variant emphasizes enhanced spatial understanding and reasoning about the physical world, and it is intended as a tool for roboticists to integrate with their own control systems. Gemini Robotics-ER can be thought of as a vision-language model with an intuitive grasp of physics and geometry – it can look at a scene and infer things like object locations, relationships, or potential affordances. For instance, if shown a coffee mug, the model can identify an appropriate grasping point (the handle) and even plan a safe approach trajectory for picking it up. It marries the perception and language skills of Gemini with a sort of built-in physics engine and even coding abilities: the model can output not only actions but also generated code or pseudo-code to control a robot, which developers can hook into low-level controllers. In tests, Gemini-ER could perform an end-to-end robotics pipeline (perception → state estimation → spatial reasoning → planning → control commands) out-of-the-box, achieving 2-3× higher success rates on certain tasks compared to using Gemini 2.0 alone. A striking feature is its use of in-context learning for physical tasks – if the initial attempt isn’t sufficient, the model can take a few human-provided demonstrations as examples and then improve or adapt its solution accordingly. Essentially, Robotics-ER acts as an intelligent perception and planning module that can be plugged into existing robots, complementing the primary Gemini Robotics policy which directly outputs actions. By separating out this embodied reasoning component, DeepMind allows developers to leverage Gemini’s spatial intelligence even if they prefer to use their own motion controllers or safety constraints at the execution layer. 
This two-model approach (Gemini Robotics and Robotics-ER) gives flexibility in deployment – one can use the full end-to-end model for maximum autonomy, or use the ER model alongside manual programming to guide a robot with high-level smarts.

- Safety Mechanisms: Given the high level of autonomy Gemini Robotics enables, safety has been a paramount concern in its functionality. The team implemented a layered approach to safety, combining traditional low-level safety (e.g. collision avoidance, not exerting excessive force) with higher-level “semantic safety” checks. The model evaluates the content of instructions and the consequences of actions before executing them, especially in the Robotics-ER version, which was explicitly trained to judge whether an action is safe in a given scenario. For example, if asked to mix chemicals or put an object where it doesn’t belong, the AI should recognize potential hazards. DeepMind’s head of robotic safety, Vikas Sindhwani, explained that the Gemini models are trained to understand common-sense rules about the physical world: for instance, knowing that placing a plush toy on a hot stove or combining certain household chemicals would be unsafe. To benchmark this, the team introduced a new “ASIMOV” safety dataset and benchmark (named after Isaac Asimov, who famously penned robot ethics rules) to test AI models on their grasp of such common-sense constraints. The Gemini models reportedly performed well, answering over 80% of the safety questions correctly. This indicates the model usually recognizes overtly dangerous instructions or outcomes. Additionally, Google DeepMind has implemented a set of governance rules (a “robot constitution”) for the robot’s behavior, inspired by Asimov’s Three Laws of Robotics; the ASIMOV dataset is used to train and evaluate the robot’s adherence to these guidelines. For instance, rules might include not harming humans, not damaging property, and prioritizing user intent while obeying safety constraints. These guardrails, combined with ongoing human oversight (especially during testing phases), aim to ensure the powerful capabilities of Gemini Robotics are used responsibly and do not lead to unintended harm.
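
The layered safety idea from the last bullet can be sketched as two checks run in order: a "semantic" check that can refuse a command outright, then a low-level limit that clamps whatever gets through. Everything here is a hypothetical illustration: `UNSAFE_PAIRS` is a hard-coded lookup standing in for the model's learned judgment, and `MAX_FORCE_N`, `Command`, and `vet` are invented names and values, not part of any DeepMind system.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Toy stand-in for learned semantic safety: (object, destination) pairs to refuse.
UNSAFE_PAIRS = {("plush toy", "hot stove"), ("bleach", "ammonia")}
MAX_FORCE_N = 20.0  # assumed low-level force limit, in newtons


@dataclass
class Command:
    obj: str
    destination: str
    force_n: float


def semantic_check(cmd: Command) -> Optional[str]:
    """Return a refusal reason if the command is unsafe in context, else None."""
    if (cmd.obj, cmd.destination) in UNSAFE_PAIRS:
        return f"refused: combining {cmd.obj} with {cmd.destination} is unsafe"
    return None


def low_level_limit(cmd: Command) -> Command:
    """Clamp the requested force to the hardware limit."""
    return Command(cmd.obj, cmd.destination, min(cmd.force_n, MAX_FORCE_N))


def vet(cmd: Command) -> Tuple[Optional[Command], Optional[str]]:
    """Run the semantic layer first, then the low-level layer."""
    reason = semantic_check(cmd)
    if reason is not None:
        return None, reason
    return low_level_limit(cmd), None
```

For example, `vet(Command("plush toy", "hot stove", 5.0))` comes back with no command and a refusal reason, while `vet(Command("mug", "shelf", 50.0))` passes the semantic layer and has its force clamped to 20 N. Keeping the layers separate means the low-level limits still apply even when the semantic layer misjudges a command.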

Is Gemini Robotics the Future of Robotics?

So are we done? Time for our robot overlords? The introduction of Gemini Robotics has sparked debate about whether large VLA models like this represent the future of robotics – a future where robots are generalists rather than specialists, and where intelligence is largely driven by massive pretrained AI models. Google DeepMind certainly positions Gemini as a game-changer, stating that it “lays the foundation for a new generation of helpful robots” and touting its success on previously impossible tasks. Many experts see this as a natural evolution: as AI systems have achieved human-level (and beyond) performance in language and vision tasks, it was inevitable that those capabilities would be extended to physical machines. Generative AI models are getting closer to taking action in the real world, notes IEEE Spectrum, and the big AI companies are now introducing AI agents that move from just chatting or analyzing images to actually manipulating objects and environments. In this sense, Gemini Robotics does appear to be a glimpse of robotics’ likely trajectory – one where embodied AI is informed by vast prior knowledge and can be instructed as easily as talking to a smart speaker. The ability to simply tell a robot what to do in natural language (e.g. “please tidy up the kitchen” or “assemble this Ikea chair”) and have it figure out the steps is a long-standing dream in robotics, and Gemini’s demos suggest we are closer than ever.

Proponents argue that such generalist models are necessary to break robotics out of its current limits. Up to now, most robots excel only in narrow domains (an industrial arm can repeat one assembly task all day, a Roomba can vacuum floors but do nothing else, etc.). To be broadly useful in human environments, robots must deal with endless variability – new objects, unforeseen situations, and evolving human instructions. Hard-coding every contingency or training a custom model for every task is impractical. Therefore, an AI that can leverage world knowledge and reason on the fly is needed. Google’s team emphasizes that all three qualities – generalization, adaptability, dexterity – are “necessary… to create a new generation of helpful robots” that can actually operate in our homes and workplaces. In other words, without an AI like Gemini, robots would remain too rigid or labor-intensive to program for the messy real world. By giving robots a “common sense” understanding of the world and the ability to learn tasks from description, Gemini Robotics indeed could be a cornerstone for future robotics platforms.

That said, whether Gemini’s approach is the future or just one branch is still a matter of discussion. Some robotics researchers caution against over-relying on massive pretrained models. One limitation observed even in Gemini is that its human-like biases may not always yield optimal physical decisions. For example, Gemini-ER identified the handle of a coffee mug as the best grasp point – because in human data, people hold mugs by handles – but for a robot hand, especially one immune to heat, grabbing the sturdy body of the mug might actually be more stable than the thin handle. This shows that the AI’s “common sense” is derived from human norms, which isn’t always ideal for a machine’s capabilities. Overcoming such discrepancies may require integrating pure learning from trial and error or additional physical reasoning beyond internet-based knowledge. Additionally, the most dexterous feats Gemini achieved were ones it specifically trained for with high-quality demonstrations. This indicates that while the model is general, it still benefits from practice on complex tasks – true one-shot generalization to any arbitrary manipulation is not fully solved. In essence, Gemini Robotics might be necessary but not sufficient for the future of robotics: it provides the intelligence and adaptability, but it must be paired with continued advances in robot hardware and in task-specific learning.

Another consideration is the computational complexity. Gemini 2.0 (the base model) is extremely large, and running such models in real-time on a robot can be challenging. Currently, much processing might happen on cloud servers or off-board computers. The future of robotics may depend on making these models more efficient (something Google is also addressing with smaller variants like “Gemma” models for on-device AI). The necessity of a model like Gemini might also depend on the application: for a general home assistant or a multipurpose factory robot, a broad intelligence is likely crucial. But for simpler, well-defined tasks (say, a robot that just sorts packages), a smaller specialized AI might suffice more economically. Therefore, we might see a hybrid future: in high-complexity domains, Gemini-like brains lead the way, whereas simpler robots use more lightweight solutions. Nonetheless, the techniques and learnings from Gemini (like treating actions as language tokens, and using language models for planning) are influencing the entire field. Competing approaches will likely incorporate similar ideas of multi-modal learning and large-scale pre-training because of the clear gains in capability that Gemini and its predecessors have demonstrated. In summary, Gemini Robotics does appear to be a harbinger of robotics’ future, providing a path to move beyond single-purpose machines. Its advent underscores a paradigm shift where generalist AI systems control robots, a notion that until recently belonged to science fiction, and it illustrates both the promise and the remaining challenges on the road to truly adaptive, helpful robots.

Comparisons to Alternative Approaches in Robotic AI

Gemini Robotics’s approach can be contrasted with several other strategies in robotic AI, each with its own philosophy and trade-offs. Below, we compare it to some notable alternatives:

- Modular Robotics (Traditional Pipeline): The classic approach to robot intelligence breaks the problem into separate modules: perception (computer vision to detect objects, SLAM for mapping), decision-making (often rule-based or using a planner), and low-level control (path planning and motor control). In such systems, there isn’t a single monolithic AI “brain” – instead, engineers program each component and often use specific AI models for each (like a convolutional network for object recognition, a motion planner for navigation, etc.). The advantage is reliability and predictability; each module can be tuned and verified. However, the drawback is rigidity – the robot can only do what its modules were explicitly designed for. Adding new tasks requires significant re-engineering. In contrast, Gemini Robotics is more of an end-to-end learned system. It attempts to handle perception, reasoning, and action generation in one model (or at least with tightly integrated learned components). This holistic approach allows for more flexibility – e.g. interpreting an unfamiliar instruction and devising a behavior – which a modular system might not handle if not pre-programmed. Of course, Gemini still relies on some low-level controllers (for fine motion, safety constraints), but the boundary between modules is blurred compared to the traditional pipeline. Essentially, Gemini trades some transparency for vastly greater adaptability. As robots move into unstructured environments, this trade is often seen as worthwhile, because no team of engineers can anticipate every scenario the way a large trained model can generalize.

- Reinforcement Learning and Imitation Learning: Another approach is to teach robots via trial and error (RL) or by imitating human demonstrations, training a policy neural network for specific tasks. OpenAI famously used reinforcement learning in simulation, with heavy domain randomization, to train a robot hand to solve a Rubik’s Cube, and Boston Dynamics uses a mix of planning and learned policies for locomotion. These systems can achieve remarkable feats, but they are typically narrow in scope: the policy is an expert at one task or a set of related tasks. Gato, a 2022 DeepMind model, was an attempt at a multi-task agent (it learned from many different tasks, from playing Atari games to controlling a robot arm), but even Gato had a fixed set of skills defined by its training data. Gemini Robotics differs by leveraging pretrained knowledge: rather than learning from scratch via millions of trials, it starts with a rich base of semantic and visual understanding from web data. This gives it a form of “common sense” and language ability out-of-the-box that pure RL policies lack. In essence, Gemini moves the needle from “learning by doing” to “learning by reading/seeing” (plus some doing). That means a Gemini-based robot might execute a new task correctly on the first try because it can reason it out, whereas an RL-based robot would likely flail until it accumulates enough reward feedback. The flip side is that RL can sometimes discover unconventional but effective strategies (since it isn’t constrained by human priors), and it can optimize for physical realities (dynamics, wear and tear) if given direct experience. A future approach might combine both: use models like Gemini for high-level reasoning and understanding, but allow some reinforcement fine-tuning on the robot itself to refine the motions for efficiency or reliability.
In fact, fine-tuning Gemini on robotic data (much as RT-2 was fine-tuned on real robot experience) is exactly what Google did, and further RL training could be a next step beyond the initial demo capabilities.

- Other Foundation Model Approaches: Google is not alone in applying foundation models to robotics. Competitors and research labs are pursuing similar visions. For example, OpenAI has been exploring ways to connect its GPT-4 model to robotics (though no official product has been announced, internal experiments and partnerships have been noted). The startup Figure AI, which is building humanoid robots, initially partnered with OpenAI to leverage GPT-style intelligence, but as Reuters reported, Figure decided to develop its own model after making an internal breakthrough. This suggests that a number of players are working on language-model-driven robotics, each with their own twist. Another example is Google’s own PaLM-E (an embodied language model), a precursor that integrated a vision encoder with a language model to output robot actions. Meta (Facebook) has also done research on combining vision, language, and action; though they’ve been quieter in announcing a unified model, they have components like visual question answering and world model simulators that could feed into robotics. There is also a distinction in whether the model is end-to-end or generates code for the robot. Some approaches have the AI output code (in Python or a robot API) which is then executed by a lower system. This can be safer in some cases, as the code can be reviewed or sandboxed. Gemini-ER’s ability to produce code for robot control falls into this category. Companies like Adept AI take a similar approach for software automation (outputting code from instructions); applied to robotics, one could imagine an AI that writes a script for the robot (e.g. using a library like ROS, the Robot Operating System, to move joints) rather than directly controlling every torque. The drawback is that if the situation deviates, the code might not handle it unless the AI is looped in to rewrite it. Gemini’s direct policy is more fluid, but code-generation approaches offer interpretability.
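
The "code can be reviewed or sandboxed" point from the last bullet is easy to illustrate. Below is a minimal sketch, assuming the model emits a short Python script made only of calls to a few robot primitives. The primitive names (`move_to`, `grasp`, `release`) are hypothetical, not a real robot API; the reviewer uses Python's standard `ast` module to parse the script without running it and rejects anything that isn't a whitelisted call with literal arguments.

```python
import ast
from typing import Any, List, Tuple

ALLOWED_CALLS = {"move_to", "grasp", "release"}  # hypothetical robot primitives


def review_script(source: str) -> List[Tuple[str, List[Any]]]:
    """Return the primitive calls in order, or raise ValueError if the
    script contains anything other than whitelisted top-level calls."""
    tree = ast.parse(source)
    calls = []
    for stmt in tree.body:
        # Accept only bare top-level calls such as grasp("banana").
        if not (isinstance(stmt, ast.Expr) and isinstance(stmt.value, ast.Call)):
            raise ValueError("only top-level calls are allowed")
        call = stmt.value
        if not (isinstance(call.func, ast.Name) and call.func.id in ALLOWED_CALLS):
            raise ValueError("call to a non-whitelisted function")
        # Arguments must be plain literals, so nothing can be computed or imported.
        if call.keywords or not all(isinstance(a, ast.Constant) for a in call.args):
            raise ValueError("arguments must be positional literal constants")
        calls.append((call.func.id, [a.value for a in call.args]))
    return calls
```

A script like `move_to("banana")`, `grasp("banana")`, `move_to("basket")`, `release()` (one call per line) passes review and comes back as an ordered list of steps that a lower-level controller could execute, while `import os` or a call to any other function is rejected before anything runs. This is the interpretability advantage in miniature; the cost, as noted above, is that a fixed script cannot react if the scene changes.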

In comparison to these alternatives, Gemini Robotics distinguishes itself by its scale of general knowledge and its demonstrated range of capabilities without task-specific training. An MIT Technology Review headline states concisely that it “uses Google’s top language model to make robots more useful,” implying that plugging in this very large pre-trained brain yields a robot that can do far more than ones programmed with only task-specific data. Moreover, Gemini was shown to operate “with minimal training” for new tasks: many instructions it can execute correctly the first time, thanks to its prior knowledge. This sets it apart from typical RL or modular systems, which often need extensive data or engineering for each new behavior. However, it’s worth noting that alternative approaches are converging. For instance, Robotics Transformer 2 (RT-2), while smaller in scope, was already a VLA model, and Gemini is essentially the next evolution. So rather than completely different paradigms, we are seeing a merging: classical robotics brings the safety and control expertise, while new AI brings flexibility. The future likely involves integrating these approaches; indeed, Google’s strategy with Gemini-ER is to let developers use their own controllers alongside the AI, marrying learned reasoning with trusted control algorithms.

From an industry perspective, the availability of models like Gemini Robotics can level the playing field for robotics companies. Instead of needing a large AI team to build a robot’s brain from scratch, startups can leverage a frontier model (via API or licensing) and focus on hardware and specific applications. Google asserts that such models can help “cash-strapped startups reduce development costs and increase speed to market” by handling the heavy AI lifting. Similar services may be offered by other vendors (e.g. NVIDIA’s Isaac platform). In contrast, some companies might prefer to develop their own AI to avoid dependency, as seen in Figure’s move away from OpenAI. Competitors like Tesla are also working on general-purpose humanoid robots (Tesla’s Optimus), but with a philosophy leaning on vision-based neural networks distilled from human demonstrations (as per Tesla’s AI Day presentations), an approach that is less language-oriented than Gemini’s, at least publicly. It remains to be seen which approach yields better real-world performance and safety.

In summary, Gemini Robotics exemplifies the large-scale, knowledge-rich, end-to-end learning approach to robotic AI. Alternative approaches exist on a spectrum from hand-engineered to learning-based, and many are gradually incorporating more AI-driven generality. A widely held view in the field is that to achieve human-level versatility in robots, some form of massive, generalist AI is required, whether it’s exactly Gemini or something akin to it. As of now, Gemini Robotics stands at the cutting edge, but it will no doubt inspire both open-source and commercial efforts that use similar techniques to push robotics forward.

Gemini Robotics represents a bold leap forward in the quest to endow robots with general intelligence and adaptability. By fusing vision, language, and action, it allows machines to see the world, reason about it with human-like understanding, and act to carry out complex tasks – all guided by natural language. The origins of this model lie in years of incremental progress (from multi-modal models to robotic transformers) that have now converged into an embodied AI agent unlike any before. Technically, Gemini’s VLA model demonstrates unprecedented capabilities: it can generalize knowledge to new situations, interact fluidly with humans and dynamic environments, and perform fine motor skills once thought too difficult for robots without extensive programming. These achievements inch us closer to the long-envisioned general-purpose robot helper.

Yet this is not an endpoint but a starting point for the next era of robotics. Gemini Robotics does appear to chart the future: a future where intelligent robots can be taught with words and examples, not just hard-coded instructions, and where they can work alongside people in everyday environments. Its approach is likely to influence most robotic AI strategies going forward, whether through direct usage or through the adoption of similar large-model techniques by others. At the same time, the necessity of such powerful AI in robots also brings into focus the responsibilities that come with it. Ensuring safety, aligning actions with human values, and considering the societal impact are as integral to this future as the algorithms themselves.

In comparing Gemini to other approaches, we see that while alternative methods exist (from classical robotics to reinforcement learning), the field is coalescing around the idea that scale and generality in AI are key to cracking the hardest robotics challenges. Gemini’s current edge in performance (reportedly doubling previous systems on generalization benchmarks) validates this direction. But it also highlights that cooperation between human engineers and AI will remain crucial – whether for fine-tuning dexterous skills, implementing safety constraints, or adapting the model to new robot hardware. The “brain” may be general, but the implementation details and guarantees still require human ingenuity and caution.

Fun Stuff for more:

- Google DeepMind – “Gemini Robotics brings AI into the physical world” (Blog post, Mar 12, 2025)

- Reuters – “Google introduces new AI models for rapidly growing robotics industry” (Mar 12, 2025)

- Wired – “Google’s Gemini Robotics AI Model Reaches Into the Physical World” by Will Knight (May 2025)

- IEEE Spectrum – “With Gemini Robotics, Google Aims for Smarter Robots” by Eliza Strickland (Mar 12, 2025)

- Ars Technica – “Google’s new robot AI can fold delicate origami, close zipper bags…” by Benj Edwards (Mar 12, 2025)

- MIT Technology Review – “Gemini Robotics uses Google’s top language model to make robots more useful” by Scott J. Mulligan (Mar 12, 2025)

- Digital Trends – “Gemini might soon drive futuristic robots that can do your chores” by Patrick Hearn (Mar 12, 2025)

- Google DeepMind – “RT-2: New model translates vision and language into action” (Blog post, 2023)

- Google DeepMind – Gemini Robotics Official Page

- Google DeepMind – Gemini Robotics Technical Report (2025)

- Others (Nature, Gizmodo, CNBC summaries) for additional context (Techmeme: Google DeepMind launches two AI models, Gemini 2.0-based Robotics and Robotics-ER, to help robots “perform a wider range of real-world tasks than ever before” (Emma Roth/The Verge)).
